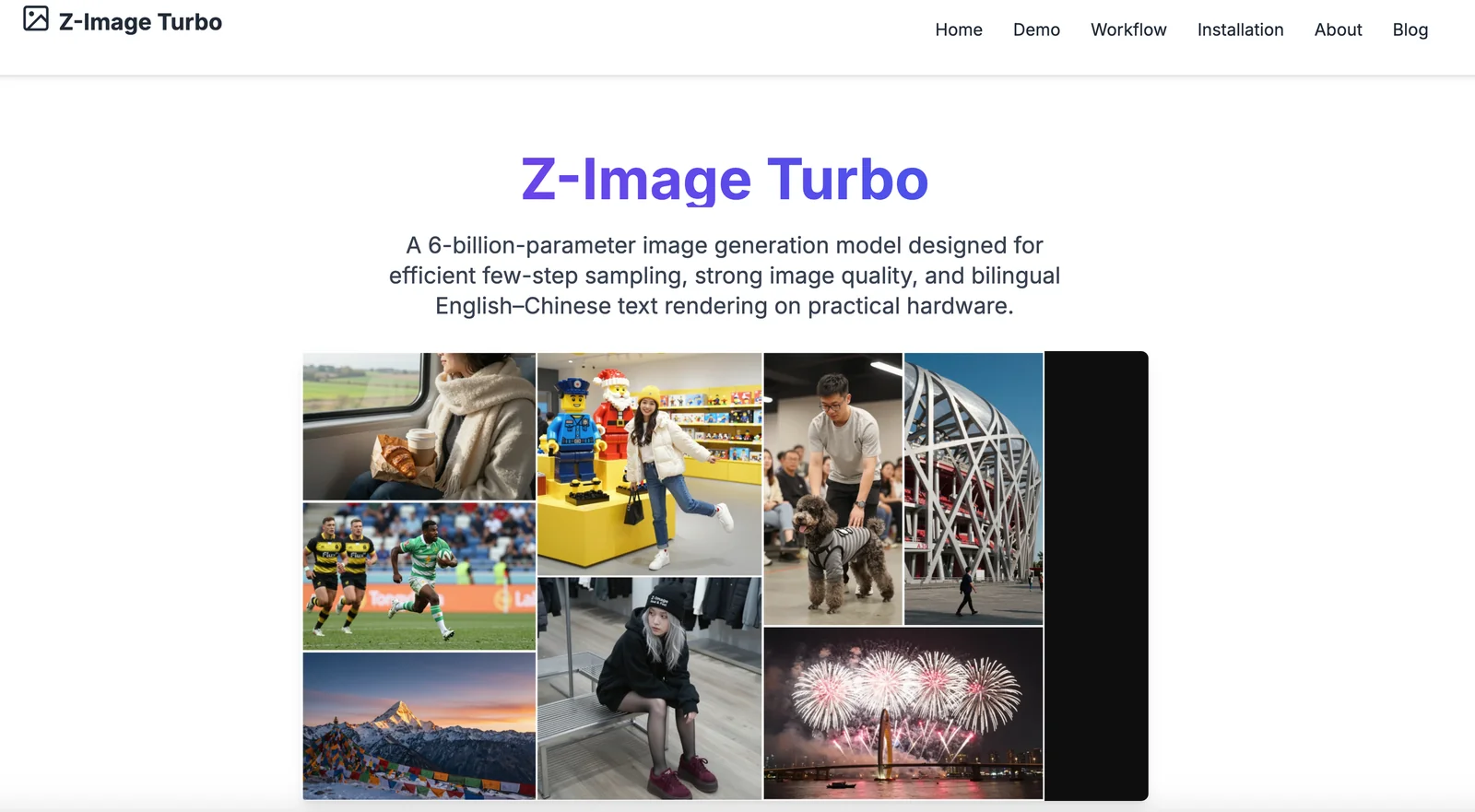

Z Image Turbo

AI, Open Source, Free

A 6B-parameter, efficient text-to-image model (Z-Image-Turbo) optimized for few-step sampling, photorealism, and English–Chinese text rendering.

About Z Image Turbo

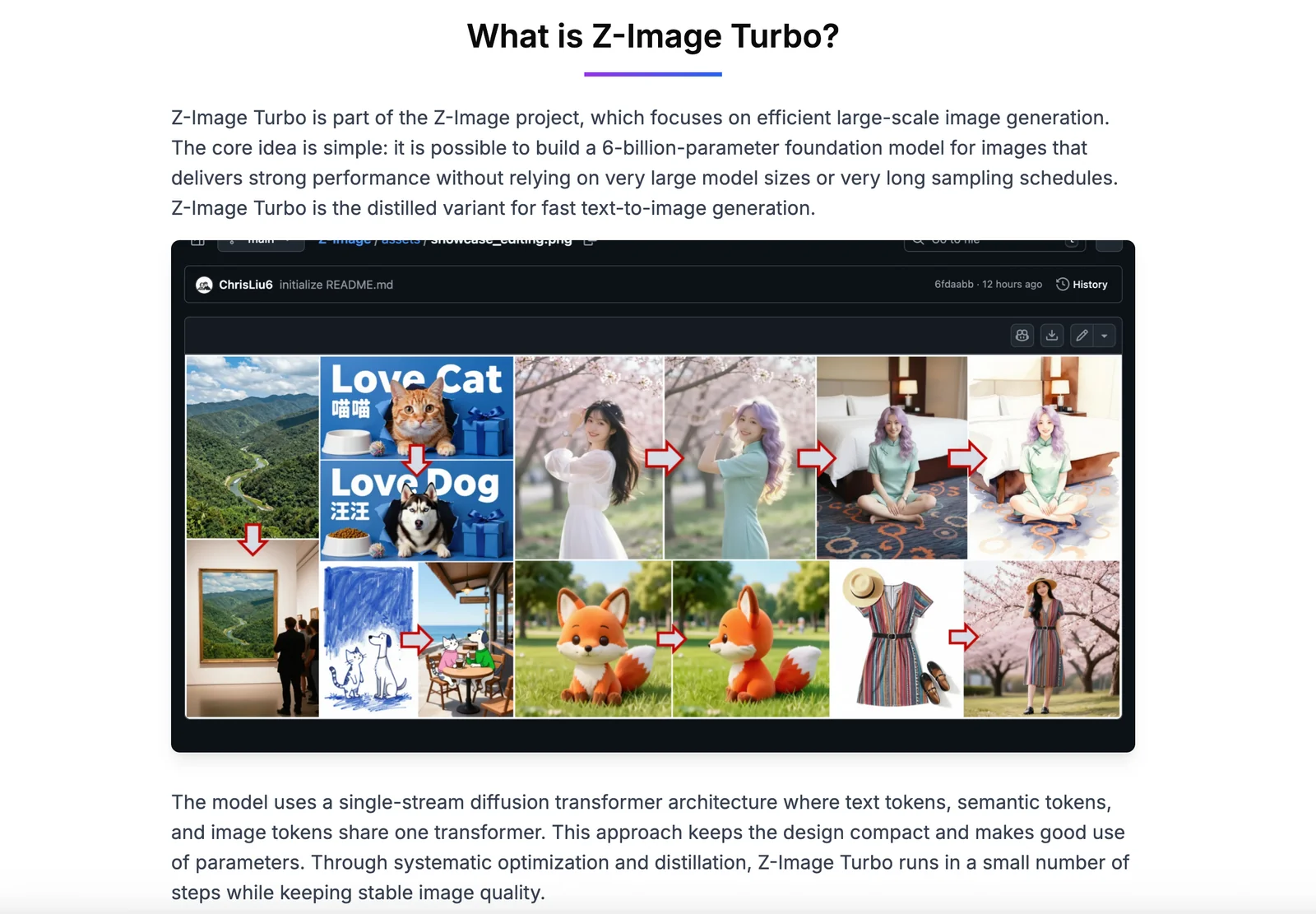

Z-Image-Turbo is a distilled 6B-parameter text-to-image foundation model built on a single-stream diffusion transformer (S3-DiT). It is engineered for few-step sampling, with a default of 8 function evaluations (NFEs), which enables low-latency inference on enterprise GPUs and practical deployment on 16 GB consumer GPUs. The model emphasizes photorealistic image quality, robust instruction adherence, and accurate bilingual (English and Chinese) text rendering. Its stack combines a Qwen 4B text encoder for conditioning with a Flux VAE, and uses training and distillation techniques (DMDR/DMD+RL) to compress these capabilities into a fast inference model. It supports low-precision formats (bfloat16, FP8) and integrates with Diffusers, ComfyUI, and local MPS/CUDA pipelines.
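
As a rough illustration of the description above, the sketch below estimates weight-only memory for the 6B transformer at the two precisions mentioned and shows how an 8-step generation call might look through Diffusers. Several things here are assumptions rather than facts from this page: the Hugging Face repo id `Tongyi-MAI/Z-Image-Turbo`, the 1024×1024 resolution, the guidance scale of 0.0 (a common choice for distilled models), and the one-to-one mapping between scheduler steps and NFEs. The names `TurboSettings`, `build_call_kwargs`, `weight_gib`, and `generate` are hypothetical helpers introduced for this example only.

```python
from dataclasses import dataclass

# Assumption: Hugging Face repo id; it is not stated in the description above.
MODEL_ID = "Tongyi-MAI/Z-Image-Turbo"

# Bytes per parameter for the low-precision formats named in the description.
BYTES_PER_PARAM = {"bfloat16": 2, "fp8": 1}


def weight_gib(num_params: float, precision: str) -> float:
    """Approximate weight-only memory in GiB.

    Ignores activations, the text encoder, and the VAE, so real usage is higher.
    """
    return num_params * BYTES_PER_PARAM[precision] / 2**30


@dataclass(frozen=True)
class TurboSettings:
    steps: int = 8               # few-step budget from the description (8 NFEs)
    guidance_scale: float = 0.0  # assumption: distilled models often skip CFG
    height: int = 1024           # assumption: illustrative resolution
    width: int = 1024
    seed: int = 0


def build_call_kwargs(prompt: str, settings: TurboSettings = TurboSettings()) -> dict:
    """Turn settings into keyword arguments for a Diffusers pipeline call."""
    if settings.steps < 1:
        raise ValueError("steps must be at least 1")
    return {
        "prompt": prompt,
        # Assumption: one scheduler step per function evaluation; some
        # schedulers differ by one, so check the model card before relying on it.
        "num_inference_steps": settings.steps,
        "guidance_scale": settings.guidance_scale,
        "height": settings.height,
        "width": settings.width,
    }


def generate(prompt: str, settings: TurboSettings = TurboSettings()):
    """Load the pipeline and render one image (downloads the model weights)."""
    # Imported lazily so the helpers above work without torch or diffusers.
    import torch
    from diffusers import DiffusionPipeline

    if torch.cuda.is_available():
        device = "cuda"
    elif torch.backends.mps.is_available():
        device = "mps"
    else:
        device = "cpu"

    pipe = DiffusionPipeline.from_pretrained(MODEL_ID, torch_dtype=torch.bfloat16)
    pipe.to(device)
    # A CPU generator keeps the seed reproducible across devices.
    generator = torch.Generator(device="cpu").manual_seed(settings.seed)
    return pipe(generator=generator, **build_call_kwargs(prompt, settings)).images[0]


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        print(f"6B weights in {precision}: {weight_gib(6e9, precision):.1f} GiB")
```

Running the script prints only the memory estimate and downloads nothing. Calling `generate("a neon sign that reads OPEN")` would fetch the weights and return a PIL image, provided the assumed repo id and a recent Diffusers release are available. The weight-only figure for bfloat16 sits below 16 GB, which is consistent with the consumer-GPU claim, though the text encoder and activations add to it in practice.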

Screenshots