Qwen3-Omni

AI · Open Source · Free

End-to-end omni-modal large language model that understands text, audio, images, and video and can generate real-time speech.

About Qwen3-Omni

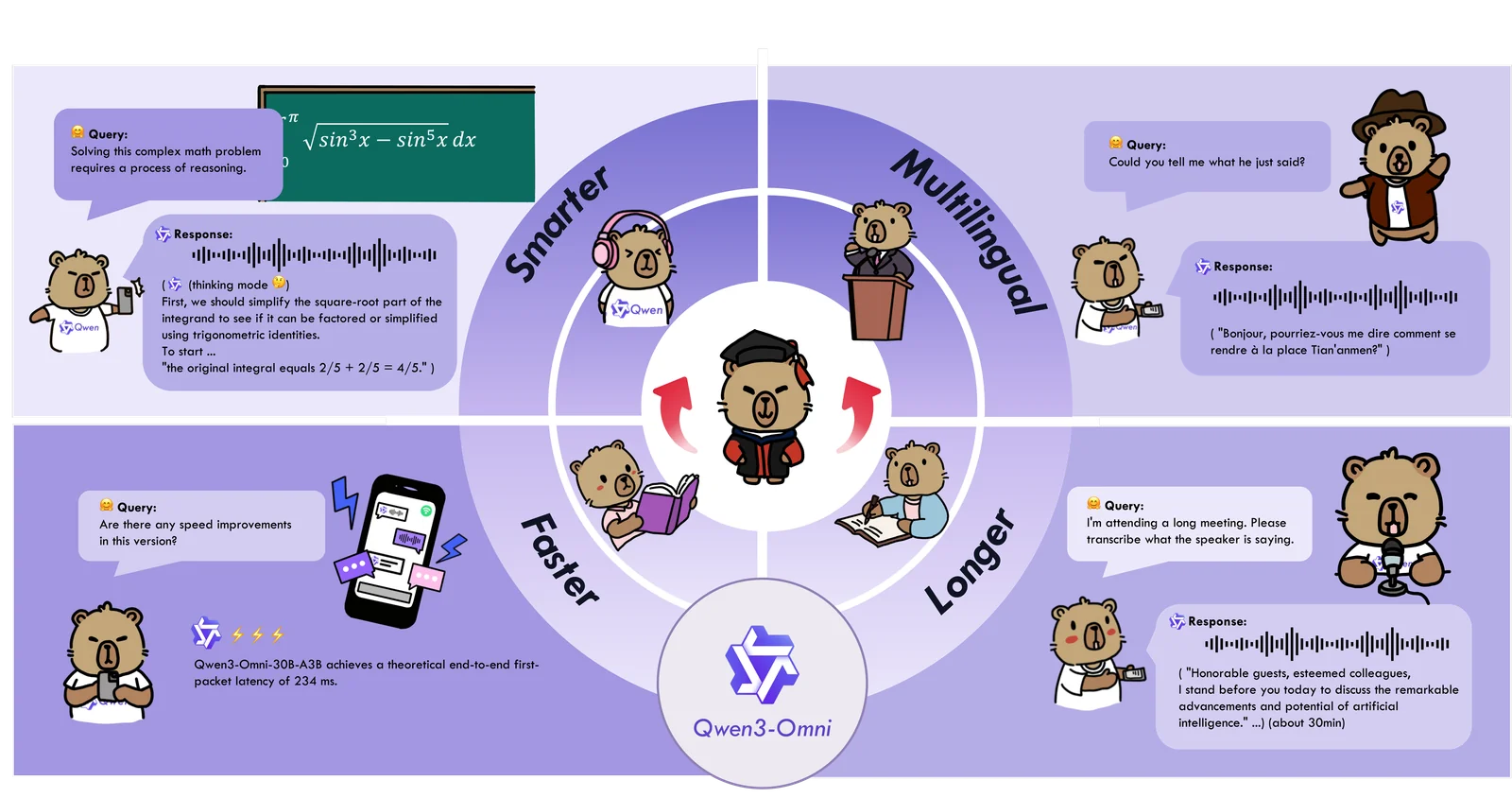

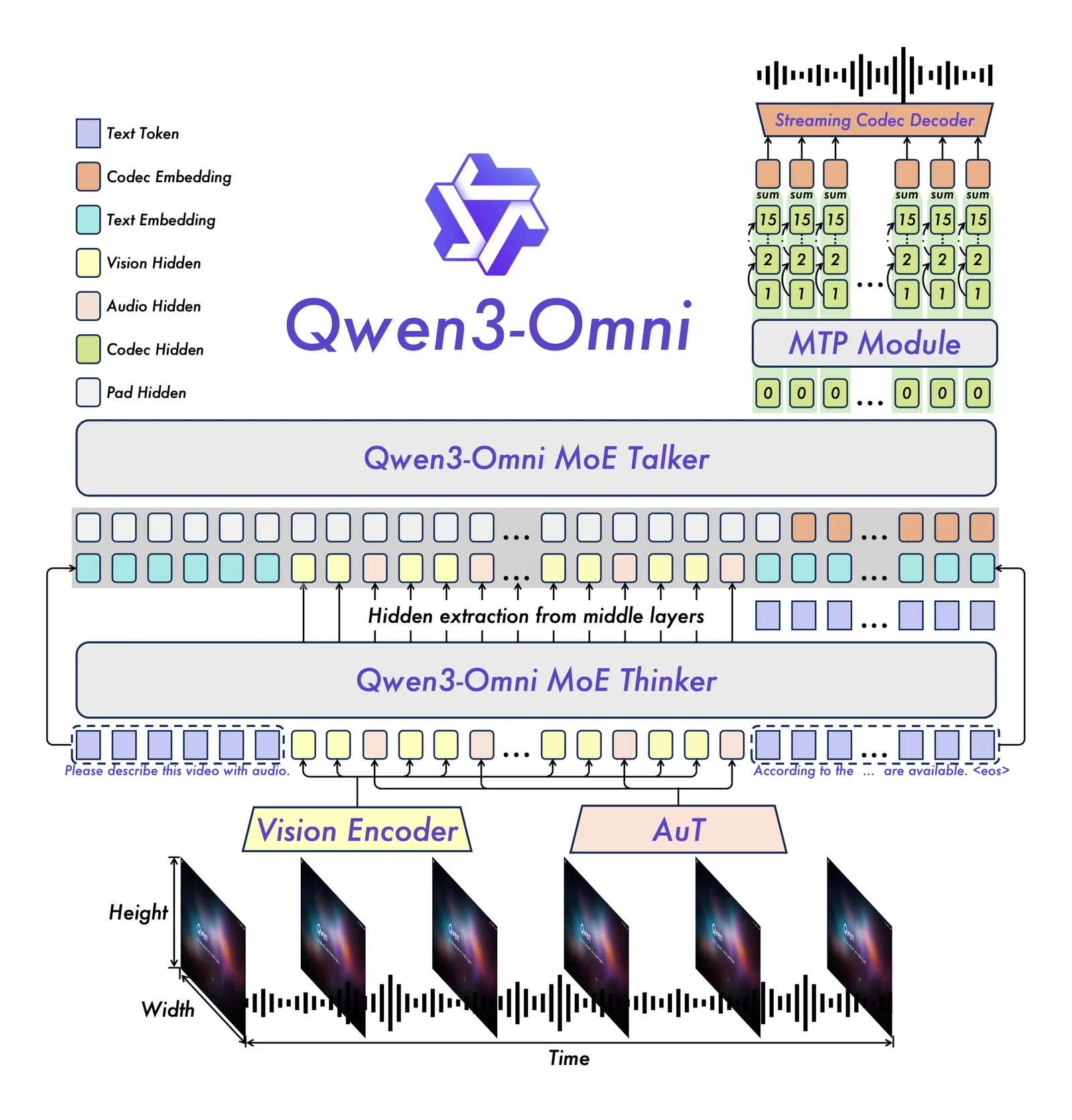

Qwen3-Omni is a natively end-to-end, omni-modal large language model developed by the Qwen team at Alibaba Cloud (QwenLM). It takes text, audio, images, and video as input, reasons across them, and responds in text or real-time speech. The project emphasizes low-latency, streaming interaction: audio and video conversations with natural turn-taking and immediate text or speech responses. Qwen3-Omni ships with specialized variants (e.g., Captioner, Instruct, Thinking) aimed at tasks such as detailed audio captioning and instruction following. It is published openly on GitHub for community use, inspection, and integration.

Screenshots