Ollama

AI · Open Source

A local-first runtime and tooling to run, manage, and integrate large language models on personal or self-hosted infrastructure.


About Ollama

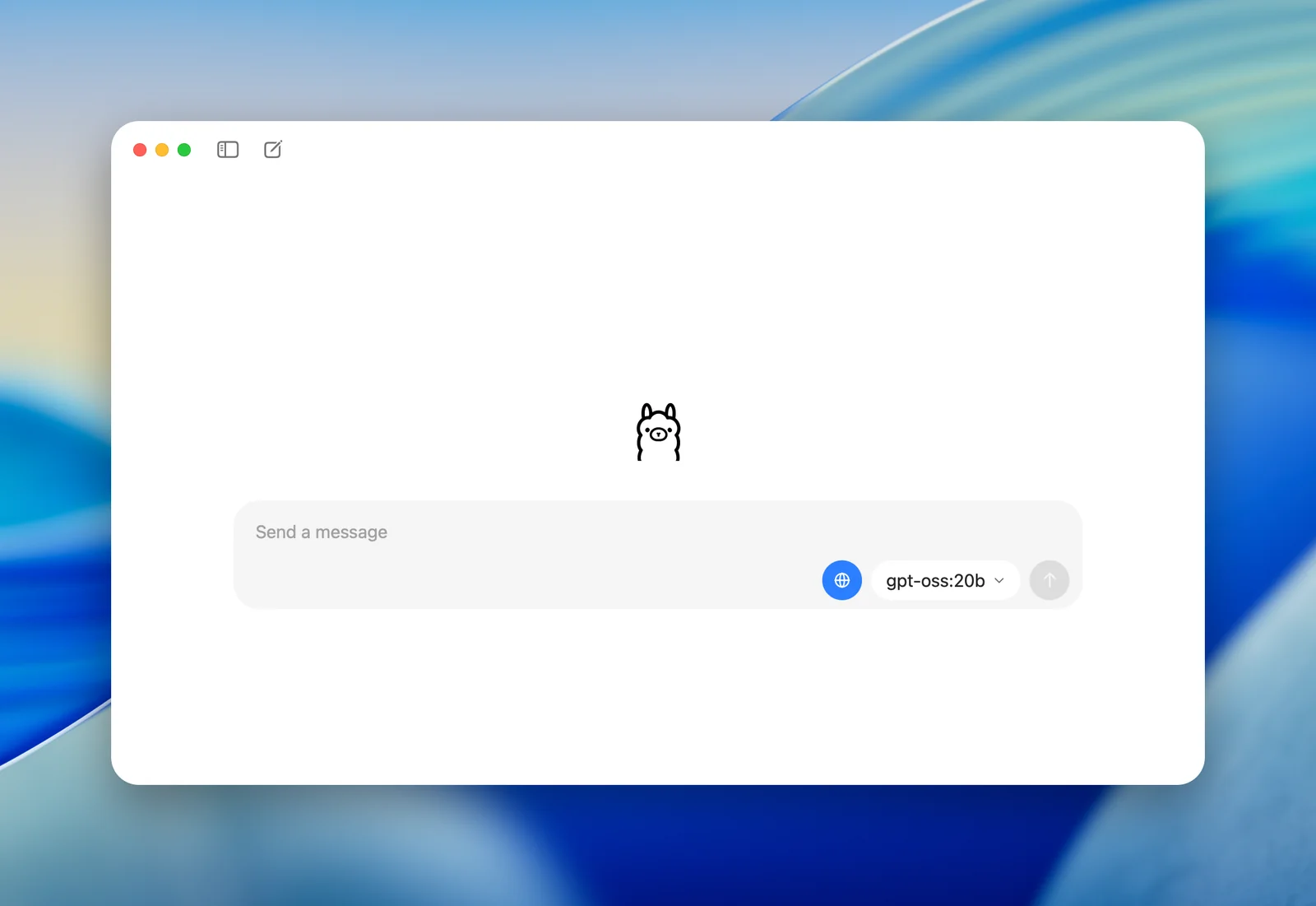

Ollama is a lightweight, extensible runtime with developer tooling for running large language models locally or on your own infrastructure. It exposes a simple API and CLI to create, run, and manage models, offers a library of pre-built models, and supports integrations through community SDKs and apps. Ollama also provides a web-search augmentation API that gives models up-to-date information and helps reduce hallucinations, plus a cross-platform desktop client that connects to local or remote Ollama servers. Together, these enable privacy-preserving, low-latency LLM usage with flexible deployment options and ecosystem integrations.
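As a rough illustration of the API mentioned above, the following sketch sends a single prompt to a running Ollama server over HTTP. It assumes the server listens on the default local address `http://localhost:11434` and uses the `/api/generate` endpoint with streaming disabled. The helper names `build_generate_request` and `generate` are hypothetical, chosen for this example only, and the model name you pass must be one you have already pulled.

```python
import json
import urllib.request

# Assumed default address of a local Ollama server; change it for a remote host.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str,
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for the /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, base_url: str = OLLAMA_URL) -> str:
    """Send the prompt to the server and return the generated text."""
    request = build_generate_request(model, prompt, base_url)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False the server replies with one JSON object
    # whose "response" field holds the full completion.
    return body["response"]
```

With a server running and a model available, calling `generate("<model-name>", "Why is the sky blue?")` should return the completion as a string. Keeping request construction separate from the network call makes it easy to point the same code at a remote server by passing a different `base_url`.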

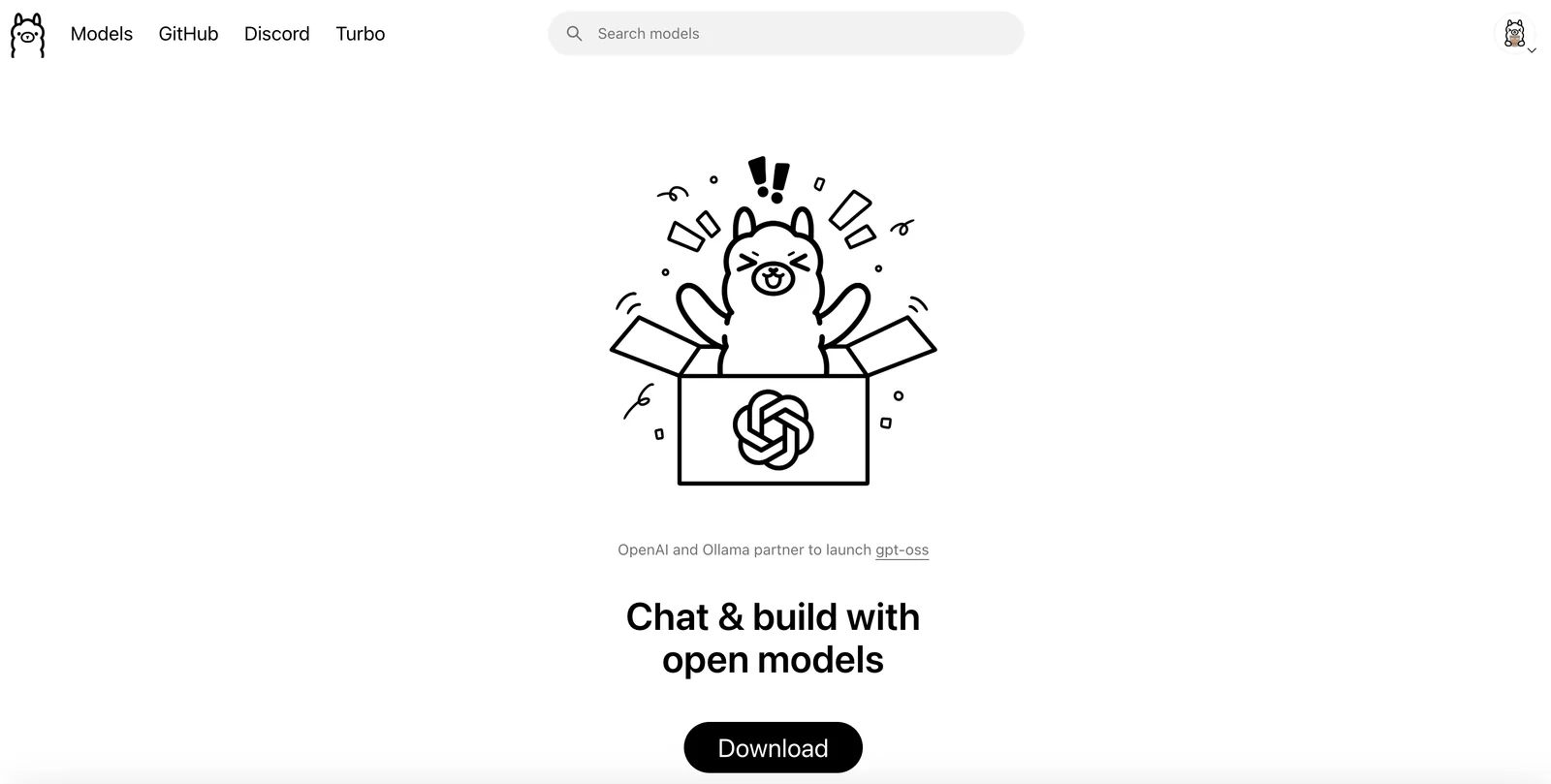

Screenshots