Mistral 3

Frontier family of multimodal, long-context language models offering scalable MoE and vision capabilities for enterprise assistants and agents.

About Mistral 3

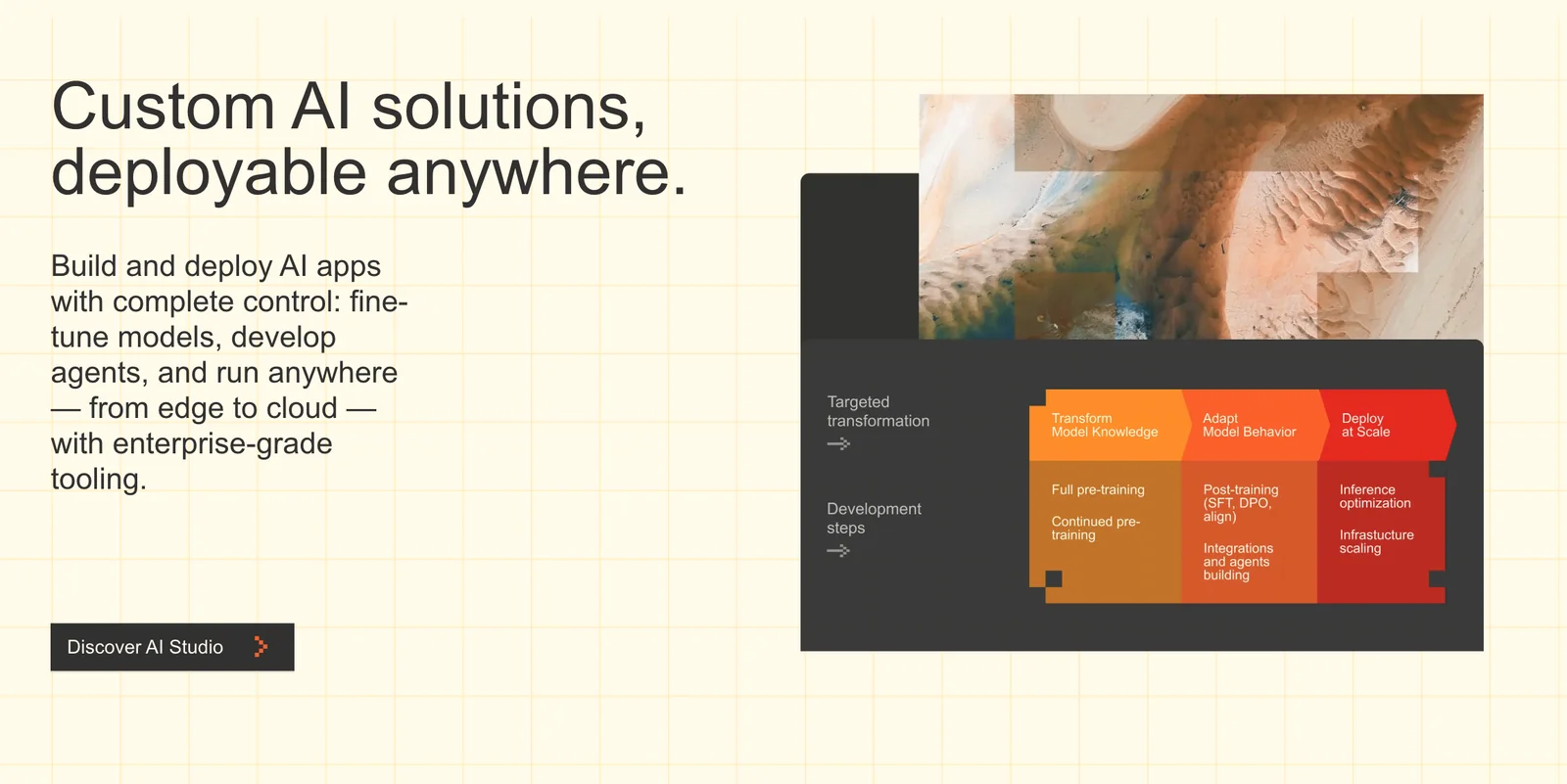

Mistral 3 is a family of frontier large language models from Mistral AI. The models combine multimodal (text and vision) understanding with long-context reasoning, and scale performance through a granular Mixture-of-Experts (MoE) architecture. The family spans smaller low-latency models (e.g., 24B-class Small 3.1 variants) and very large MoE models (the Large 3 series, with hundreds of billions of total parameters, of which tens of billions are active), plus instruction-tuned and vision-enabled derivatives. Mistral 3 models target long-document understanding, coding and mathematical reasoning, multilingual tasks, and agentic, tool-using assistants. They are distributed with an ecosystem of inference, fine-tuning, and client libraries that supports on-premise or cloud deployment and enterprise integration.

Screenshots