Llama 4

AI, Open Source, Free

Llama 4 is Meta's multimodal mixture-of-experts foundation model series (Scout & Maverick) optimized for efficient, high-performance text and image understanding.

About Llama 4

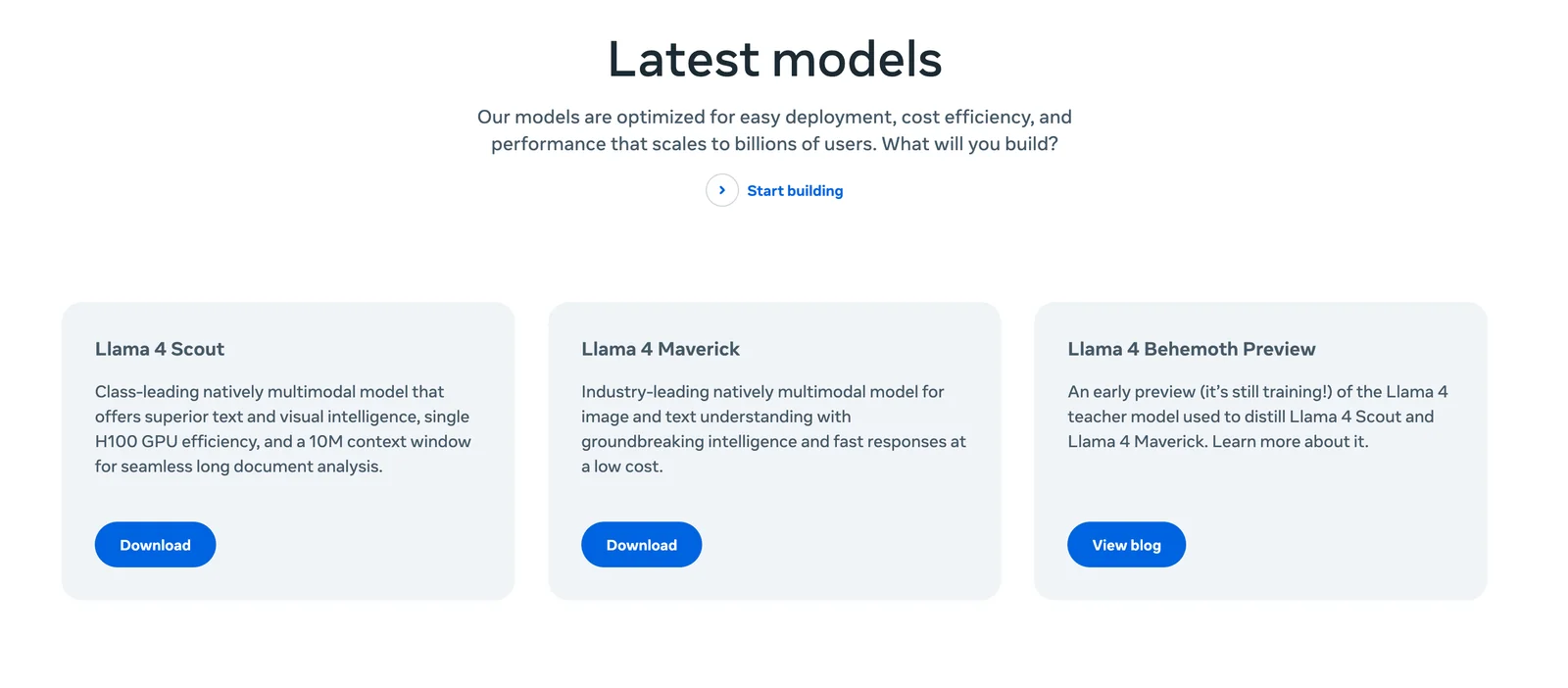

Llama 4 is a family of foundation models from Meta that provide native multimodality (text and images) using an auto-regressive, mixture-of-experts (MoE) architecture with early fusion for vision. The release includes two models that each activate 17B parameters per token: Llama 4 Scout (16 experts) and Llama 4 Maverick (128 experts). Because only a subset of experts runs for any given token, the models carry much larger total parameter counts (reported as roughly 109B for Scout and 402B for Maverick) while keeping inference compute and cost lower than dense models of comparable size. Llama 4 is offered in pretrained and instruction-tuned variants: pretrained models can be adapted for generation tasks, while instruction-tuned models are optimized for assistant-like chat, visual reasoning, captioning, and image question answering. The distribution includes model weights, training and inference code, and fine-tuning utilities under Meta's license terms. The models are intended for both commercial and research use, and deployment typically requires a multi-GPU setup or a supported cloud provider.
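To make the gap between active and total parameters concrete, here is a minimal sketch of a generic MoE layer with top-k routing. It is not Meta's implementation: the `ToyMoELayer` class, its `param_counts` helper, the single-matrix experts, and the tiny dimensions are all assumptions chosen for illustration, and real MoE layers use larger MLP experts and more elaborate routing.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a row-major matrix by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


class ToyMoELayer:
    """Hypothetical toy MoE layer: a router selects top_k experts per token."""

    def __init__(self, dim, num_experts, top_k=1, seed=0):
        rng = random.Random(seed)
        self.dim = dim
        self.top_k = top_k
        # Router: one row of scoring weights per expert.
        self.router = [[rng.gauss(0, 1) for _ in range(dim)]
                       for _ in range(num_experts)]
        # Assumption: each expert is a single dim x dim linear map.
        self.experts = [
            [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(dim)]
            for _ in range(num_experts)
        ]

    def forward(self, token):
        probs = softmax(matvec(self.router, token))
        # Keep only the top_k experts with the highest routing probability.
        chosen = sorted(range(len(probs)), key=lambda i: probs[i],
                        reverse=True)[: self.top_k]
        norm = sum(probs[i] for i in chosen)
        out = [0.0] * self.dim
        for i in chosen:
            weight = probs[i] / norm  # renormalize over the chosen experts
            expert_out = matvec(self.experts[i], token)
            out = [o + weight * e for o, e in zip(out, expert_out)]
        return out, chosen

    def param_counts(self):
        """Return (total, active-per-token) parameter counts."""
        per_expert = self.dim * self.dim
        router = len(self.router) * self.dim
        total = router + per_expert * len(self.experts)
        active = router + per_expert * self.top_k
        return total, active


layer = ToyMoELayer(dim=8, num_experts=16, top_k=1)
output, chosen = layer.forward([1.0] * 8)
total, active = layer.param_counts()
print(f"experts used: {chosen}, total params: {total}, active: {active}")
```

In this toy setup every expert's weights must be stored, but only the router and the selected expert run for each token. That is the same trade-off, at a vastly smaller scale, that lets an MoE model hold far more parameters than it activates per token.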
