HuggingFace Gaia 2

AI | Open Source | Free

Gaia2 is an open benchmark and evaluation suite of 800 dynamic scenarios for studying and comparing generalist agent capabilities.

About HuggingFace Gaia 2

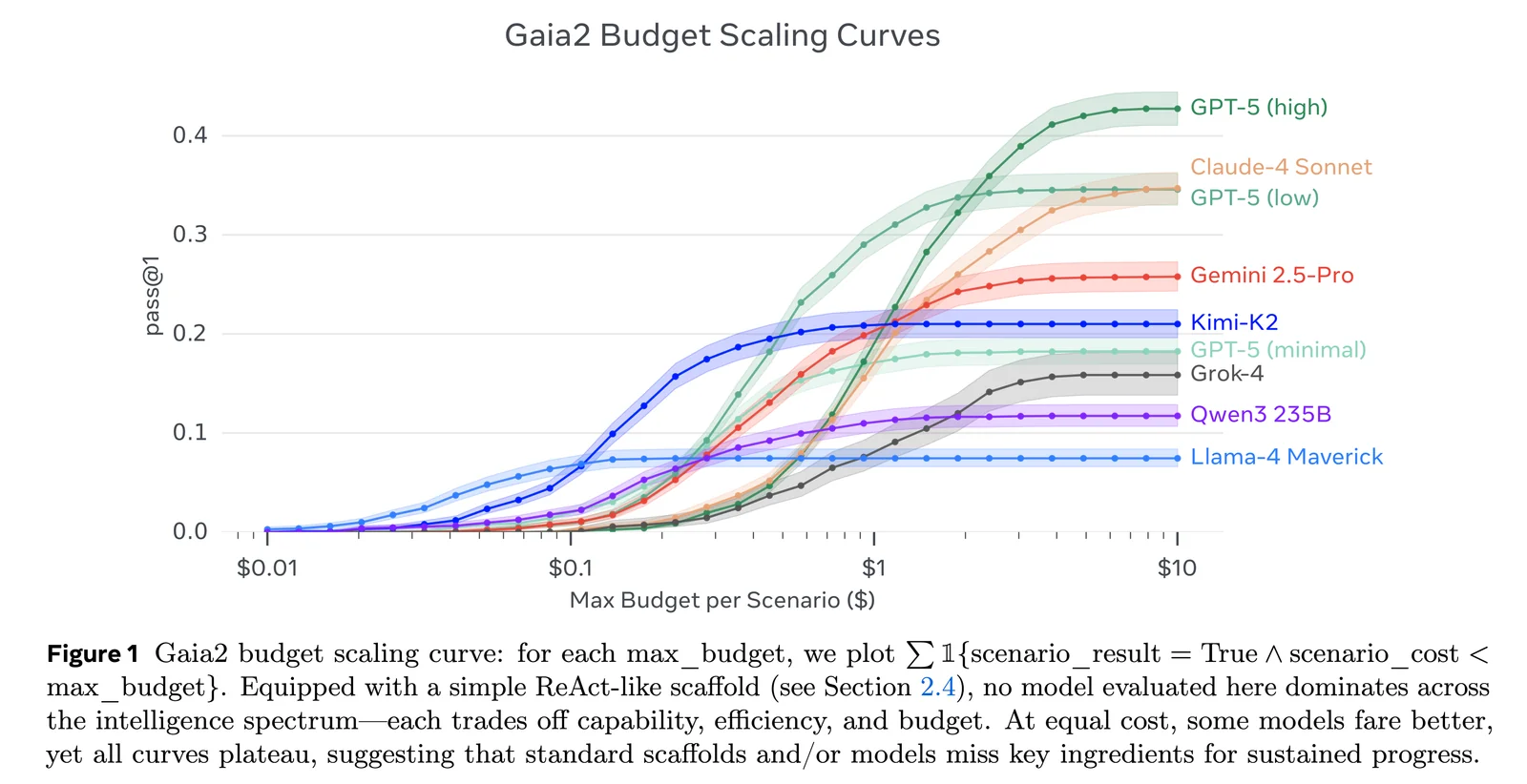

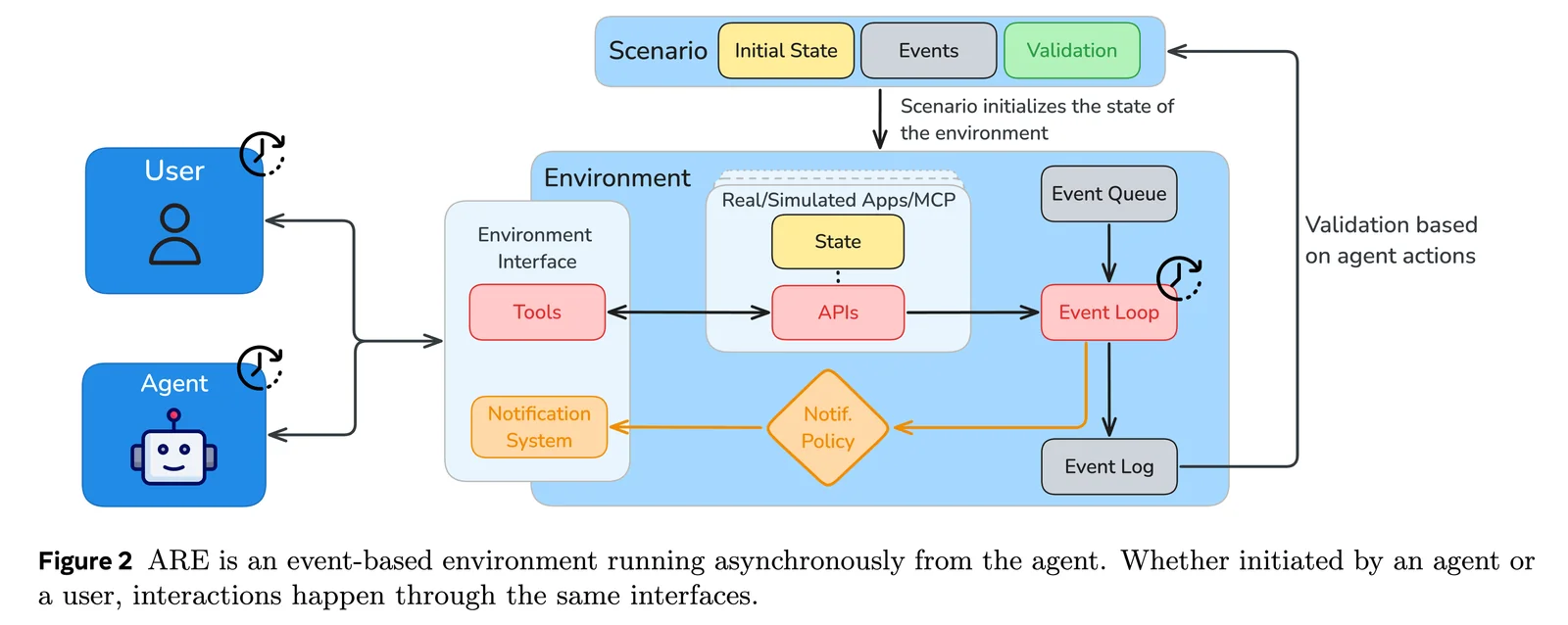

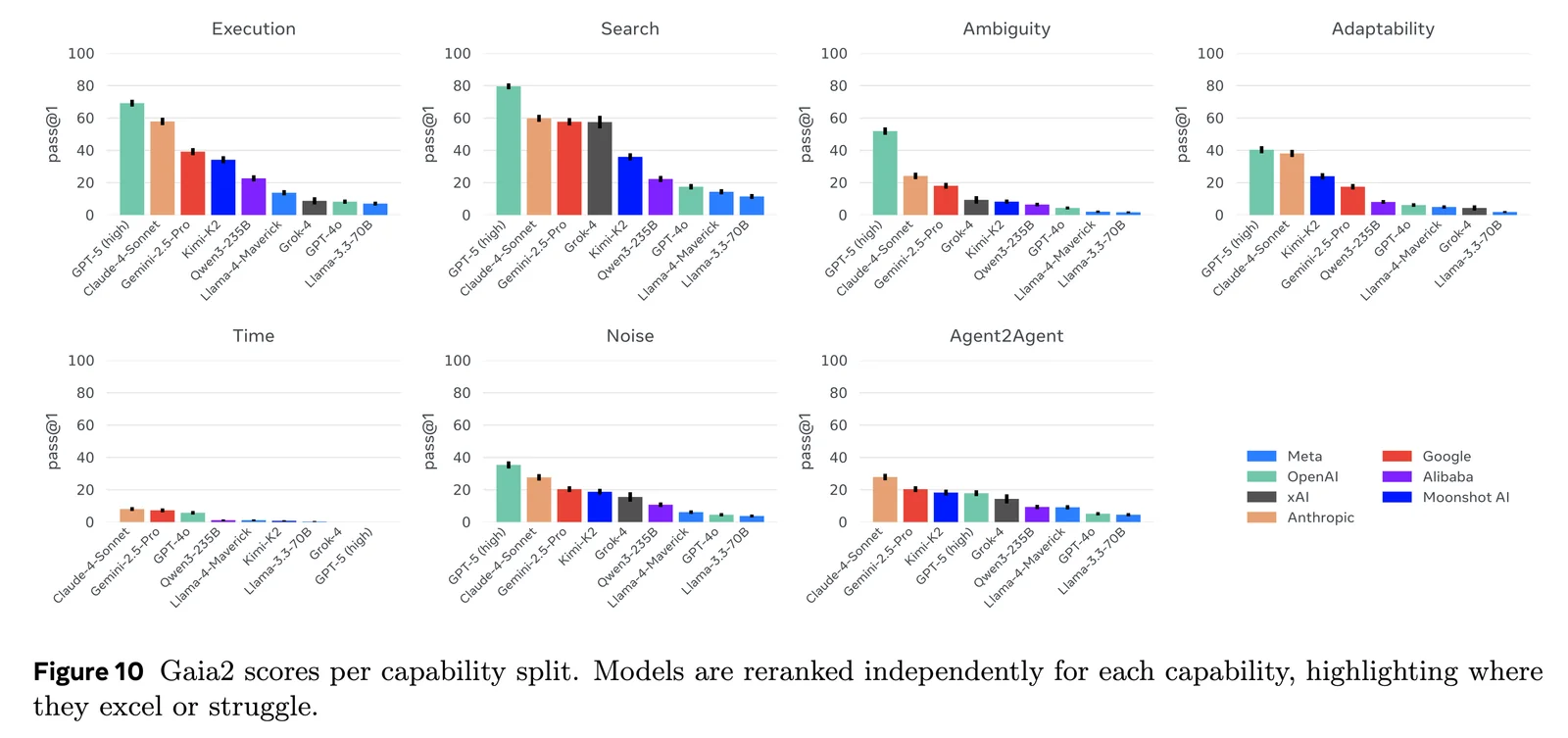

Gaia2 is a large-scale benchmark and dataset for evaluating generalist AI agents on multi-step, multi-tool, and multi-modal tasks. It is hosted on Hugging Face and integrates with the ARE (Agent Research Environments) toolkit from Meta Research, and it provides 800 dynamic scenarios spanning multiple universes and capability configurations (execution, search, adaptability, time, ambiguity). The benchmark runs multi-phase evaluations (standard, Agent2Agent, and noise), requires multiple runs per scenario so that run-to-run variance can be analyzed, and produces submission-ready traces for automated leaderboard scoring. Gaia2’s value lies in reproducible, community-driven evaluation workflows, CLI/SDK integration (are-run, are-benchmark), and a public leaderboard for comparing agent systems and research approaches.
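To illustrate why repeated runs per scenario matter, here is a minimal sketch of how per-run scores and their spread could be computed. It does not use the ARE toolkit or the actual Gaia2 trace format; the record layout `(scenario_id, run_index, success)`, the function name `summarize_runs`, and the sample data are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from statistics import mean, stdev


def summarize_runs(records):
    """Aggregate hypothetical (scenario_id, run_index, success) records.

    Each run's score is the fraction of scenarios that succeeded in that run.
    The mean and sample standard deviation are then taken across runs.
    """
    per_run = defaultdict(list)
    for _scenario_id, run_index, success in records:
        per_run[run_index].append(1.0 if success else 0.0)

    # One score per run, ordered by run index.
    run_scores = [mean(outcomes) for _, outcomes in sorted(per_run.items())]

    # A sample standard deviation needs at least two runs.
    spread = stdev(run_scores) if len(run_scores) > 1 else 0.0
    return {"runs": len(run_scores), "mean": mean(run_scores), "stdev": spread}


# Made-up outcomes for two scenarios, each run three times.
records = [
    ("scenario_a", 0, True), ("scenario_b", 0, False),
    ("scenario_a", 1, True), ("scenario_b", 1, True),
    ("scenario_a", 2, False), ("scenario_b", 2, False),
]
summary = summarize_runs(records)
print(summary)
```

With a single run the spread cannot be estimated at all, which is one reason a benchmark may insist on several runs per scenario before accepting a submission.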

Screenshots