Groq

AI

High-performance inference platform delivering fast, low-cost model inference via the Groq LPU and developer tooling.

About Groq

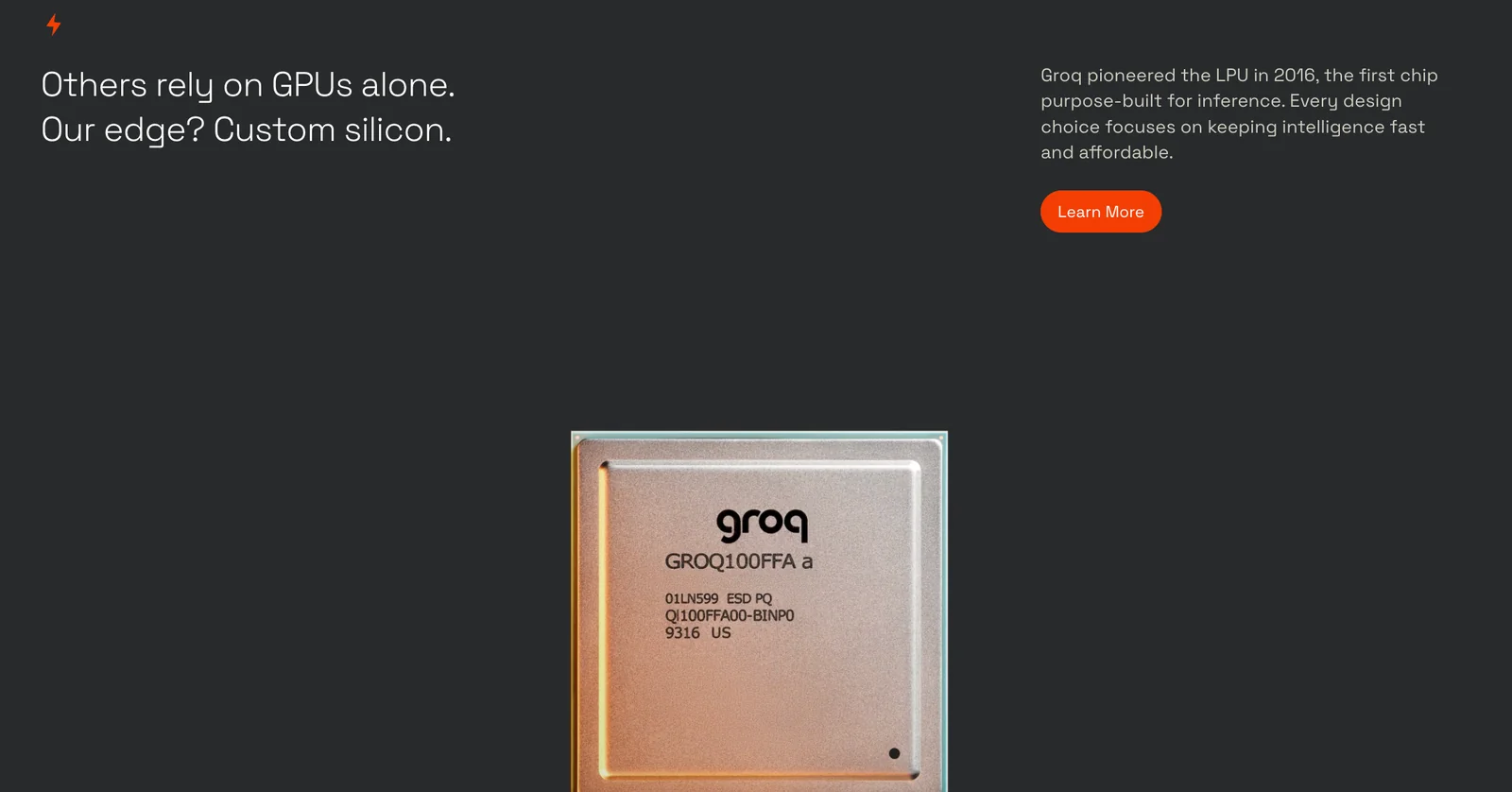

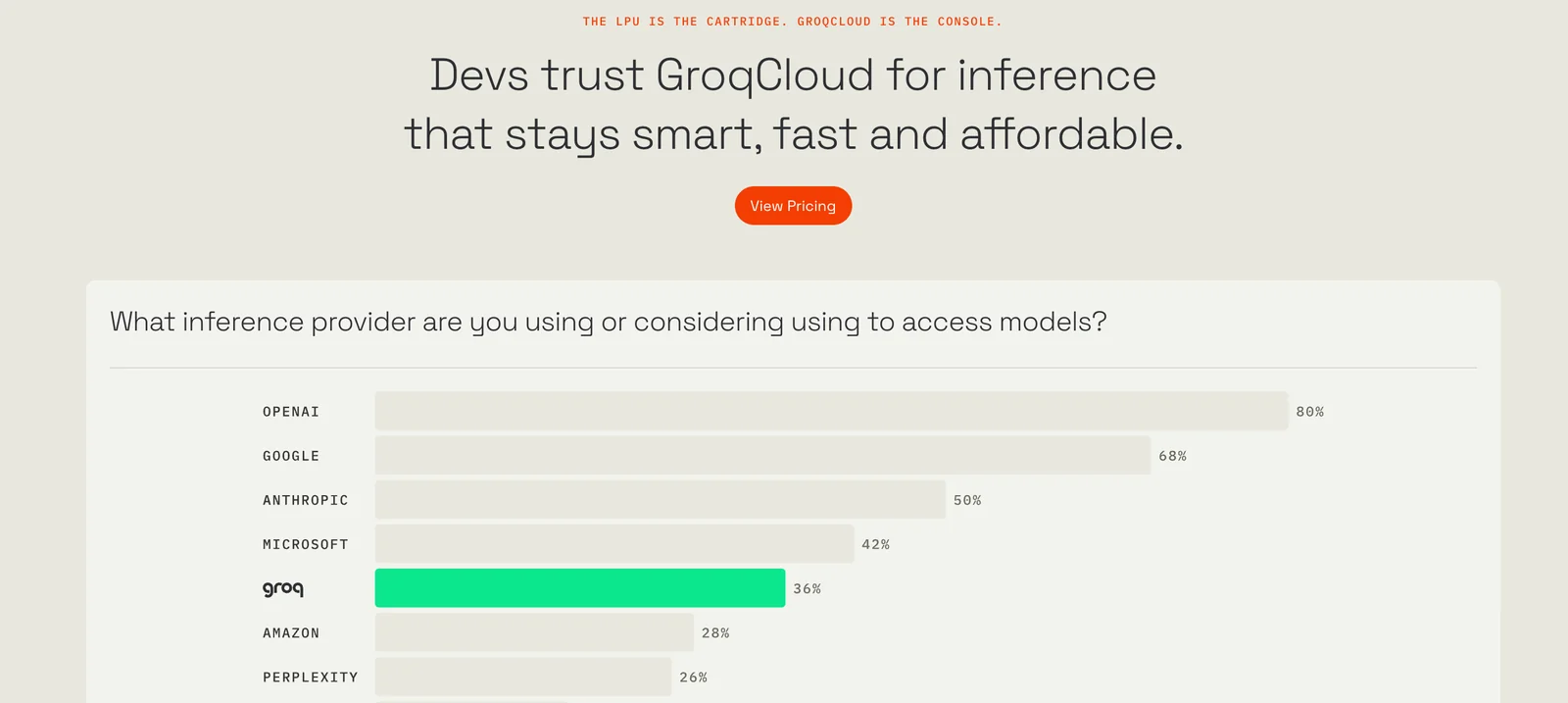

Groq provides a hardware-software platform centered on the Groq Language Processing Unit (LPU) that delivers low-latency, cost-efficient inference for machine learning workloads. The stack includes the GroqFlow compiler and toolchain, which converts ML and linear-algebra workloads into Groq programs; SDKs, including an official Python client; REST APIs; and integrations such as Gradio for rapid application deployment. By pairing specialized hardware (GroqChip/LPU) with developer-facing tooling, Groq targets production inference, high-performance computing, and multi-modal model hosting, with an emphasis on throughput, determinism, and operational cost.

Screenshots