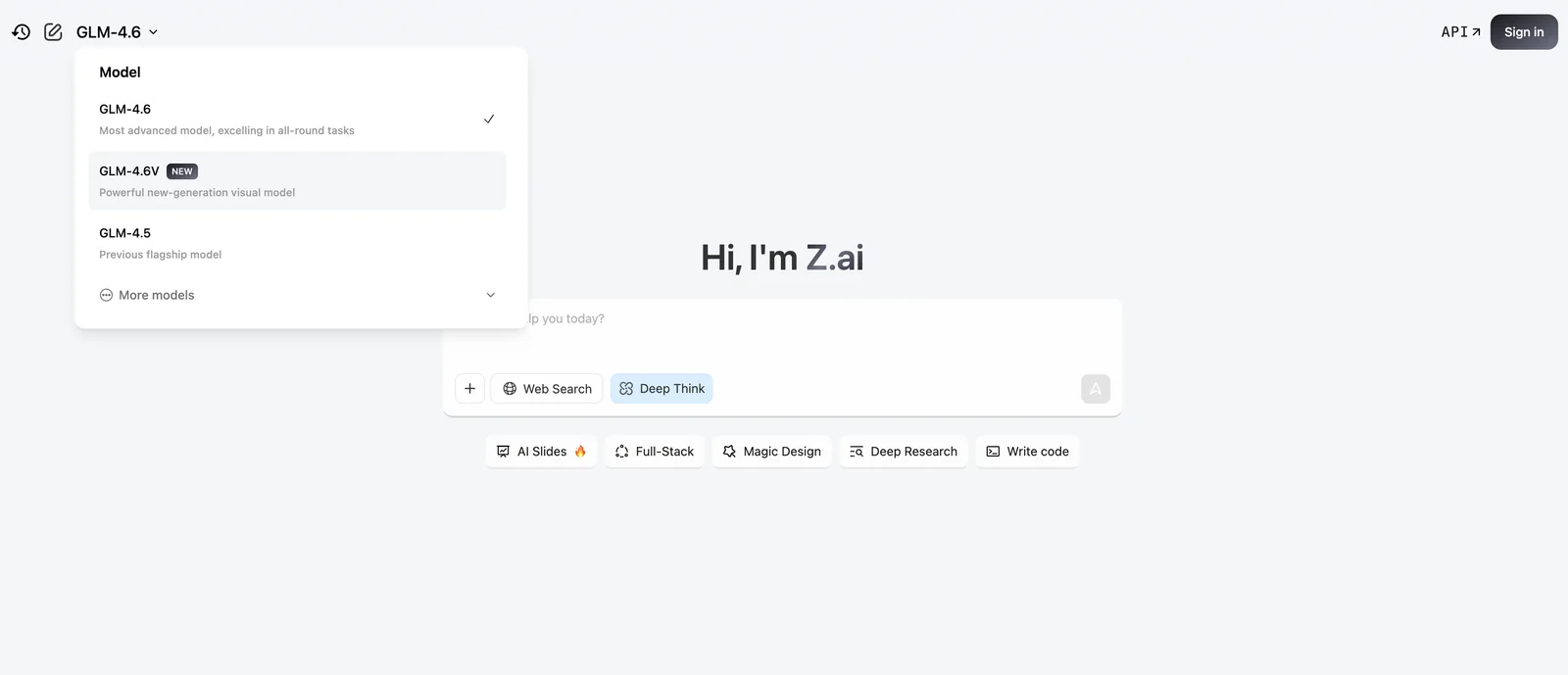

GLM-4.6V

AI, Open Source, Free

Multimodal foundation model (106B) with 128K-token context, native function-calling, and a 9B Flash variant optimized for local deployment.

About GLM-4.6V

GLM-4.6V is a multimodal foundation model family released by zai-org (Z.ai) that combines large-scale language understanding with visual and document perception. The flagship GLM-4.6V (≈106B parameters) targets cloud and high-performance cluster inference and is trained with a 128K-token context window to handle long, multi-document inputs. The lightweight GLM-4.6V-Flash variant (≈9–10B parameters) targets low-latency local deployment and is available in multiple quantized formats (GGUF variants) that reduce memory and compute requirements. GLM-4.6V also introduces native function calling (tool calling) and interleaved image-text generation, so agents can retrieve tools, call APIs, and produce coherent mixed-media outputs from documents, images, tables, and charts.
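To make the function-calling flow concrete, here is a minimal Python sketch of one round trip: a mixed image-and-text request that advertises a tool, and a dispatcher that executes the tool call the model sends back. It assumes the model is served behind an OpenAI-compatible chat-completions endpoint, which this page does not confirm. The model name `glm-4.6v`, the tool `get_chart_value`, and its data are hypothetical placeholders, and no network call is made.

```python
import json

# Hypothetical tool for illustration only; not part of GLM-4.6V.
def get_chart_value(series: str, year: int) -> dict:
    """Look up a value that a chart in the input image might refer to."""
    data = {("revenue", 2023): 4.2, ("revenue", 2024): 5.1}
    return {"series": series, "year": year, "value": data.get((series, year))}

# Tool schema in the OpenAI-compatible "tools" format (assumed, see lead-in).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_chart_value",
        "description": "Return the value of a named series for a given year.",
        "parameters": {
            "type": "object",
            "properties": {
                "series": {"type": "string"},
                "year": {"type": "integer"},
            },
            "required": ["series", "year"],
        },
    },
}]

# Maps tool names the model may emit to local Python callables.
REGISTRY = {"get_chart_value": get_chart_value}

def build_request(question: str, image_url: str, model: str = "glm-4.6v") -> dict:
    """Build a request body that mixes text and an image and offers the tools."""
    return {
        "model": model,  # placeholder name; use whatever your server exposes
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url", "image_url": {"url": image_url}},
            ],
        }],
        "tools": TOOLS,
    }

def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call and wrap the result as a 'tool' message."""
    fn = tool_call["function"]
    handler = REGISTRY[fn["name"]]
    args = json.loads(fn["arguments"])  # arguments arrive as a JSON string
    result = handler(**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }

if __name__ == "__main__":
    request = build_request("What was revenue in 2024?",
                            "https://example.com/chart.png")
    # A tool call shaped like what an OpenAI-compatible server would return.
    simulated_call = {
        "id": "call_1",
        "type": "function",
        "function": {
            "name": "get_chart_value",
            "arguments": '{"series": "revenue", "year": 2024}',
        },
    }
    print(json.dumps(run_tool_call(simulated_call), indent=2))
```

In a real agent loop, the returned `tool` message would be appended to `messages` and the conversation sent back to the model so it can compose the final answer.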

Screenshots