deepseek

AI · Open Source · Free

Open-source family of large language and multimodal models (DeepSeek-V3, R1, VL, Coder) focused on efficient MoE scaling and RL-driven reasoning.

About deepseek

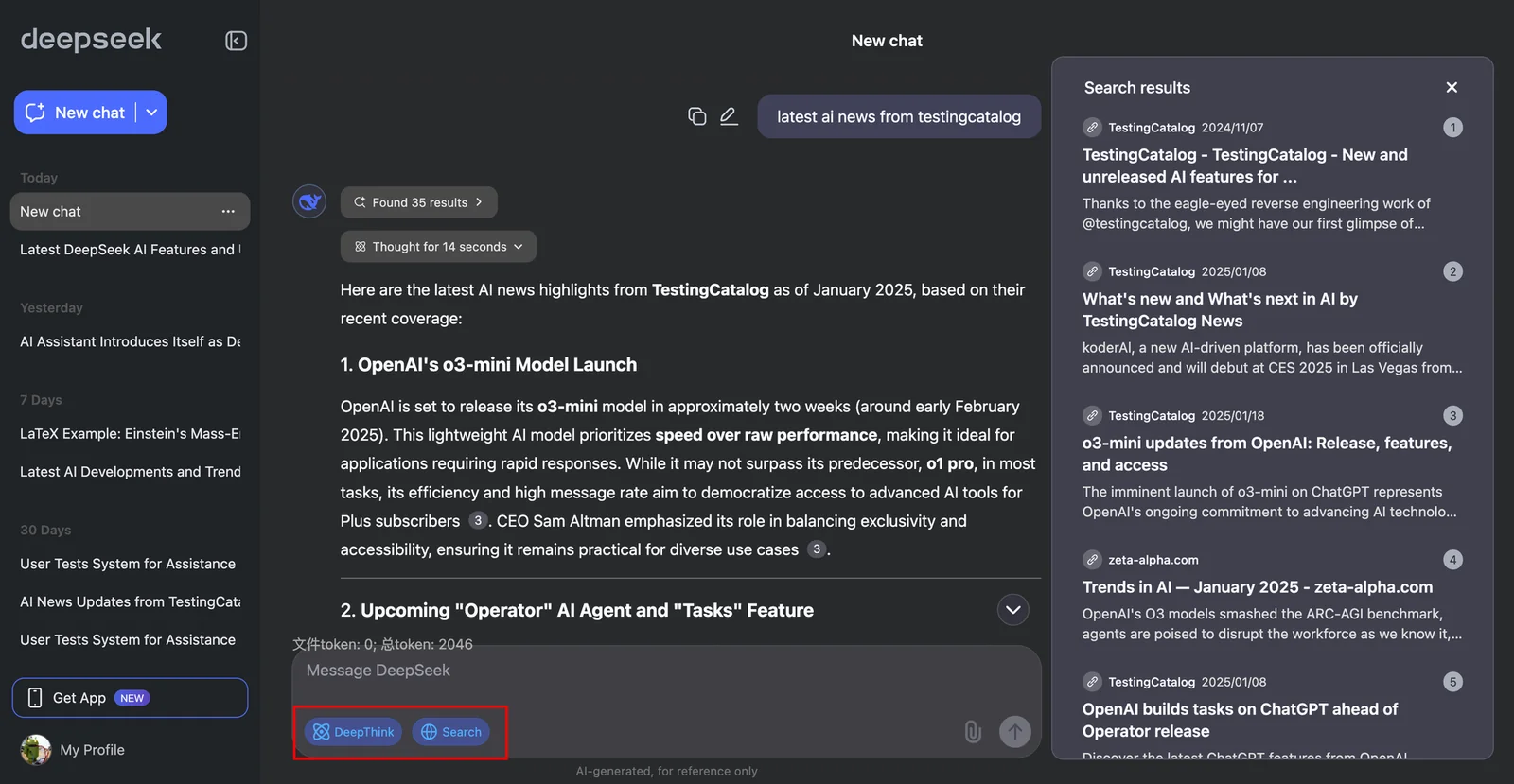

DeepSeek is a research-driven family of open-source large language and multimodal models. It includes general-purpose language models (DeepSeek-V3), reasoning-focused models (the DeepSeek-R1 series), code-specialized models (DeepSeek-Coder-V2), and vision-language models (DeepSeek-VL). Architecturally, it relies on Mixture-of-Experts (MoE) designs and Multi-head Latent Attention (MLA) to reduce inference cost while scaling to hundreds of billions of parameters: MoE activates only a subset of expert sub-networks for each token, and MLA compresses the attention key-value cache. Training combines large-scale pretraining (a reported 14.8T tokens for V3), supervised fine-tuning, and reinforcement learning stages. DeepSeek-R1 explores large-scale RL, including an RL-only variant (R1-Zero), to elicit emergent chain-of-thought and self-reflection behaviors. Its distinguishing features are open-source releases of high-performance MoE models, RL-first reasoning research, long-context and code-focused variants, and multimodal capabilities for diagrams, formulas, and web pages.
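To illustrate why an MoE design can lower inference cost, here is a minimal sketch of generic top-k expert routing in plain Python. It is not DeepSeek's actual router, which this page does not describe. The softmax gating, the toy experts, and every name (`route_top_k`, `moe_forward`, `make_expert`) are assumptions chosen for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the k selected experts' outputs.

    Unselected experts are never called, which is where the compute
    saving of a sparse MoE layer comes from.
    """
    out = [0.0] * len(x)
    for idx, weight in route_top_k(router_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Hypothetical toy experts: each scales its input and records that it ran.
calls = []

def make_expert(scale, name):
    def expert(x):
        calls.append(name)
        return [scale * v for v in x]
    return expert

experts = [make_expert(s, i) for i, s in enumerate([1.0, 2.0, 3.0, 4.0])]

# In a real model the router logits come from a learned projection of the
# token; here they are fixed numbers for illustration.
result = moe_forward([1.0, 1.0], experts, router_logits=[0.1, 2.0, 0.3, 1.5], k=2)
print("experts executed:", calls)
print("output:", result)
```

With four experts and `k=2`, only two expert functions run for this token, so the per-token compute scales with `k` rather than with the total number of experts.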

Screenshots