Comet

AI

End-to-end model evaluation platform for AI developers, offering LLM evaluation, experiment tracking, and production monitoring.

About Comet

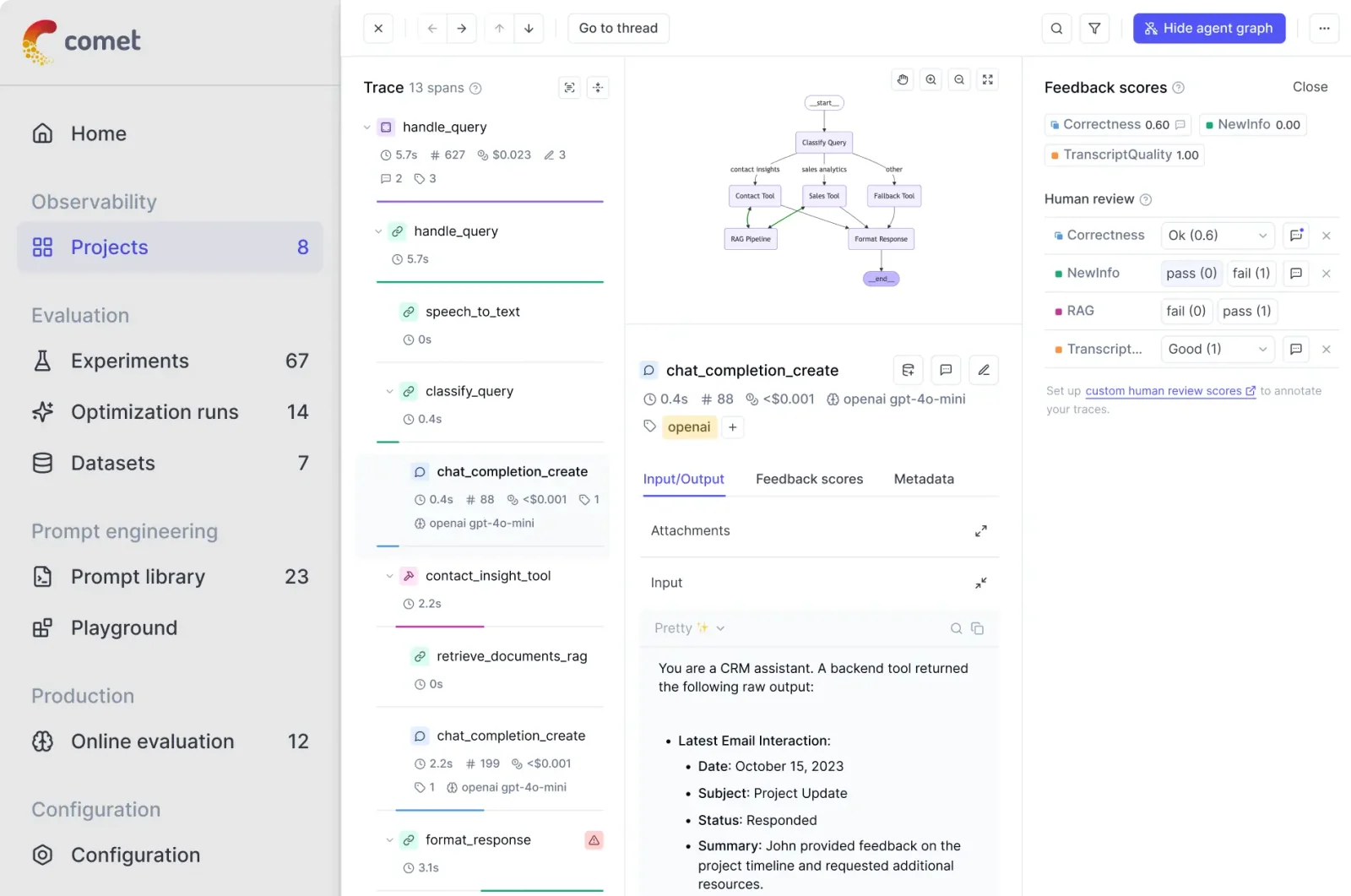

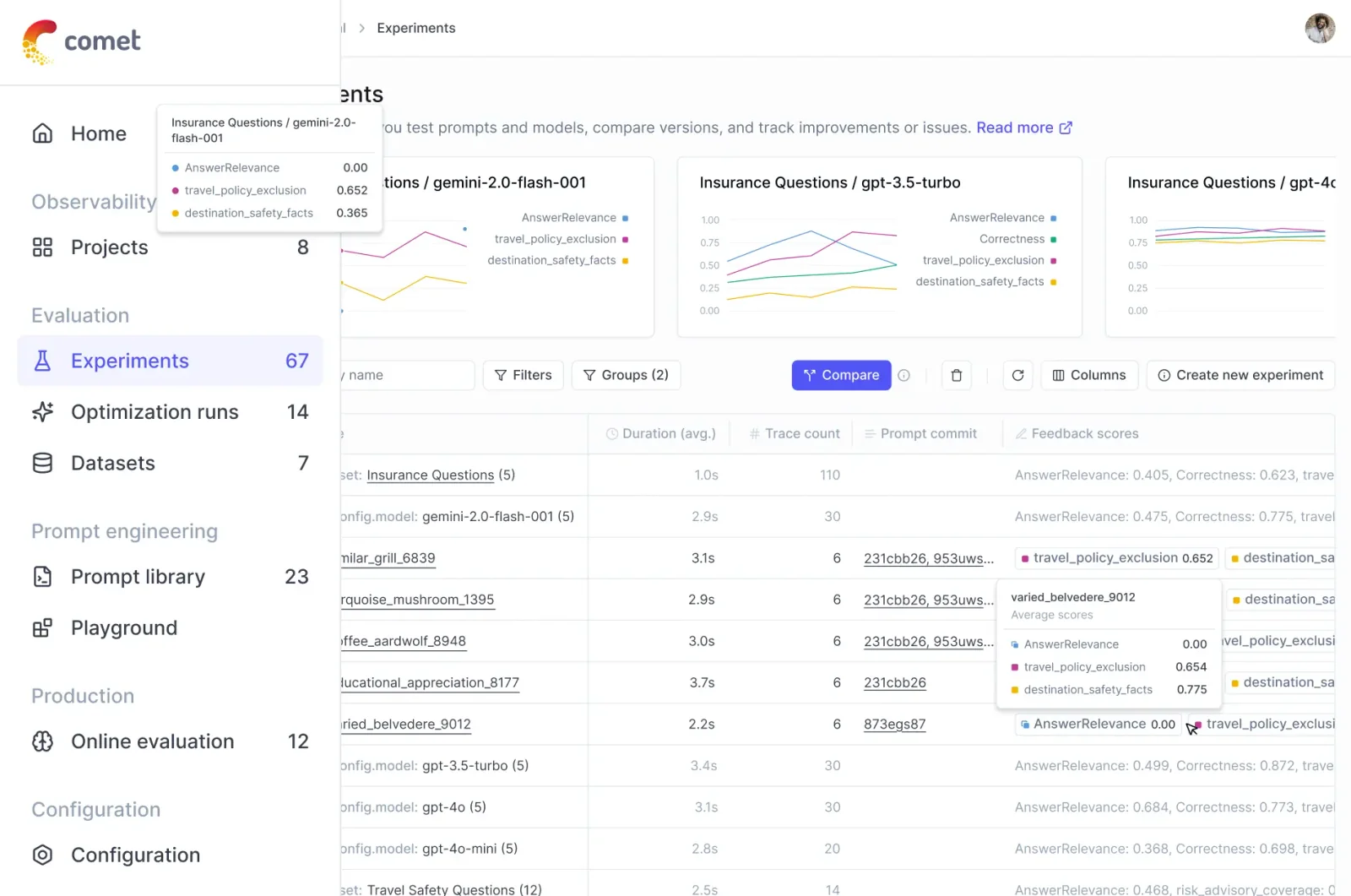

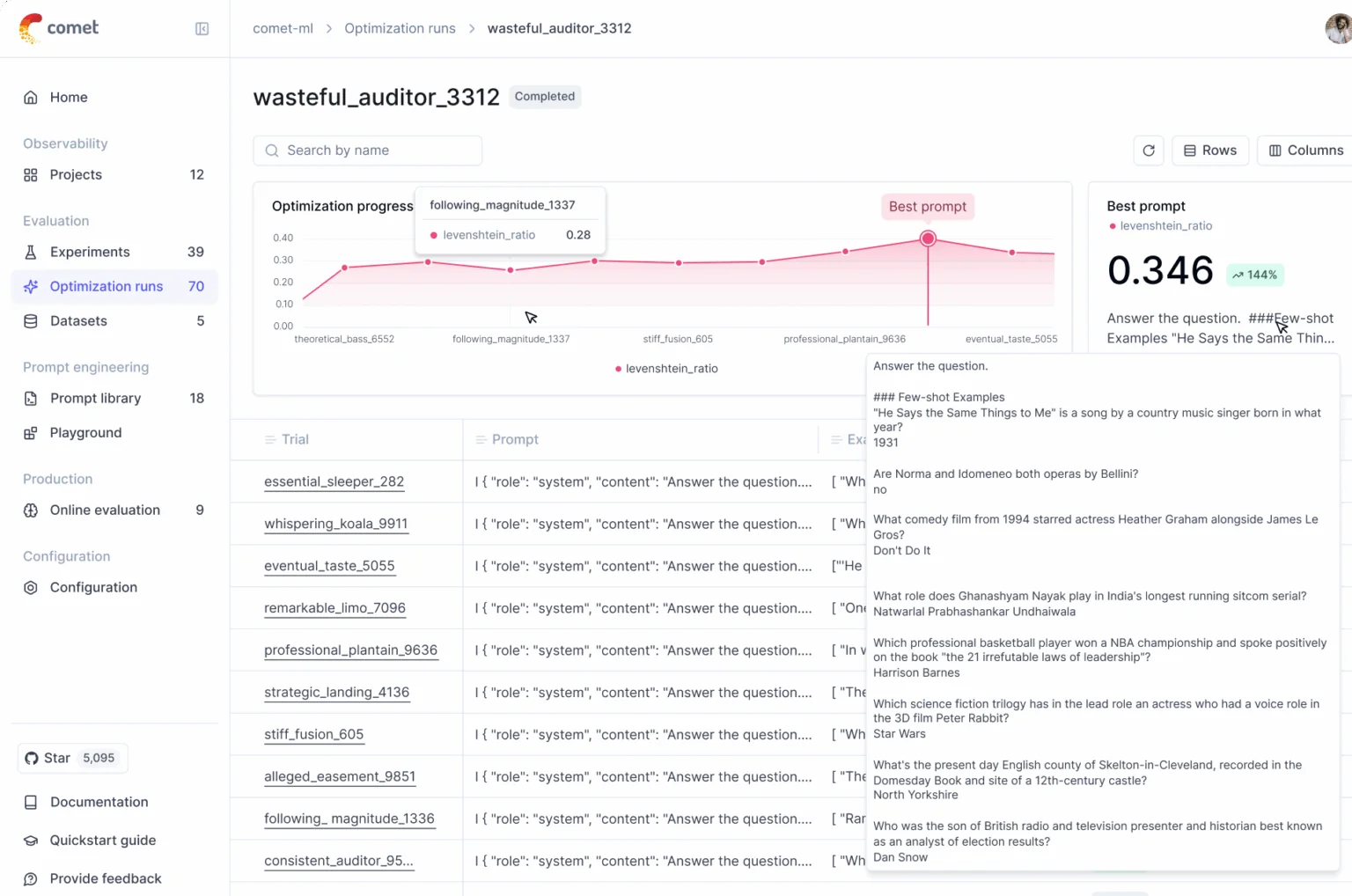

Comet is a developer platform that enables teams to evaluate, track, and monitor machine learning models throughout the ML lifecycle. It provides evaluation tooling for large language models (LLMs); experiment tracking that captures runs, hyperparameters, metrics, and artifacts; and production monitoring that detects regressions and performance drift. Comet centralizes evaluation results and observability data so that engineers and researchers can compare experiments, reproduce results, and maintain model quality in production.

Screenshots